Methodology

Why retrospective surveys measure impact more accurately

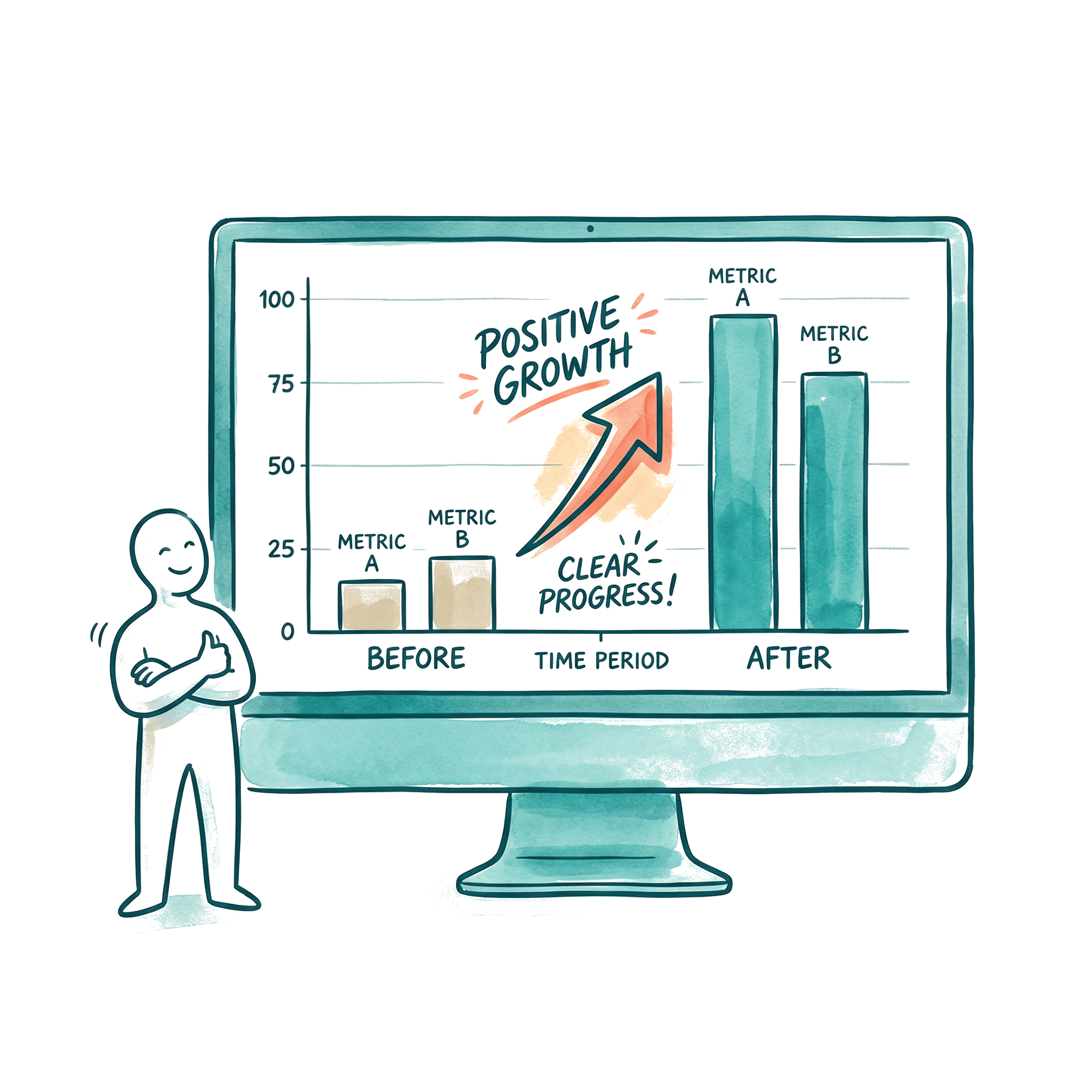

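Traditional pre-post surveys have a built-in flaw: participants often judge themselves by a different standard after a program than they did before it, so the two ratings aren't directly comparable. Retrospective surveys address this by collecting both the "before" and "after" ratings at the same point, once the program has ended. Here's how that works, and why it matters for your evaluation data.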